
Unlocking the Secrets of Transformer Architecture: The Powerhouse Behind Modern AI

Imagine asking your smartphone’s virtual assistant a complex question, and within seconds, receiving a coherent, well-structured response. This seamless interaction is made possible by the Transformer architecture — a revolutionary framework that has become the backbone of modern AI systems.

From language translation to chatbots, the power of Transformers is reshaping our digital experiences, making them more intuitive and responsive.

What is Transformer Architecture?

The Transformer architecture was introduced in the seminal paper “Attention Is All You Need” by Vaswani et al. in 2017. It marked a significant departure from earlier sequential models such as RNNs and LSTMs, which read a sequence one token at a time. Instead, it relies on a self-attention mechanism that relates all positions of the input to one another in parallel, which makes training highly efficient and scalable on modern hardware.

Key Innovations:

- Self-Attention Mechanism: Lets each token weigh every other token in the sequence and focus on the most relevant ones, giving the model a better understanding of context.

- Parallel Processing: Unlike sequential models, Transformers process all positions of a sequence at once during training, drastically reducing training times.
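To make the self-attention idea concrete, here is a minimal single-head sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, as defined in the original paper, written in NumPy. The random matrices W_q, W_k and W_v stand in for learned weights, masking and multiple heads are omitted, and the function name self_attention is hypothetical. Note that all positions are handled in a single matrix product rather than a loop over tokens, which is where the parallelism comes from.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention (no masking).

    X: (seq_len, d_model) token representations.
    W_q, W_k, W_v: (d_model, d_k) projections (random here, learned in practice).
    Returns outputs of shape (seq_len, d_k) and weights of shape (seq_len, seq_len).
    """
    Q = X @ W_q
    K = X @ W_k
    V = X @ W_v
    d_k = Q.shape[-1]
    # Every query is compared with every key in one matrix product:
    # there is no step-by-step loop over positions as in an RNN.
    scores = Q @ K.T / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)  # each row is a distribution summing to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_k = 4, 8, 8
X = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, weights = self_attention(X, W_q, W_k, W_v)
print(out.shape)  # (4, 8)
```

Row i of weights shows how strongly token i attends to each token in the sequence; the output for token i is the corresponding weighted average of the value vectors.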

Key Components of Transformer Architecture

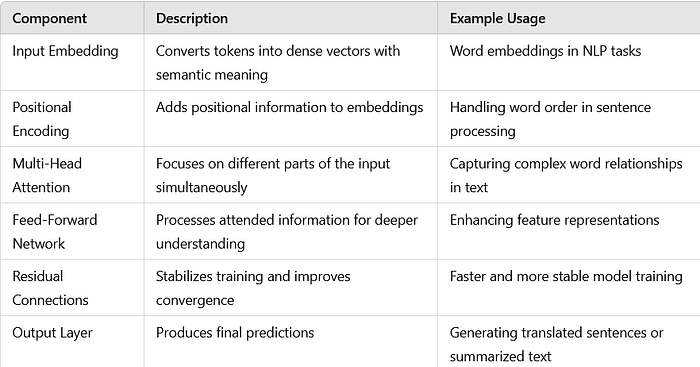

The Transformer architecture is composed of several key components that work together to process and generate data efficiently. Here’s a detailed breakdown:

1. Input Embedding:

Transformers start by converting input tokens (e.g., words or characters) into dense vector representations. These embeddings capture semantic meanings and relationships between tokens, enabling the model to understand and process language more effectively.
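In practice, an embedding layer is a learned lookup table with one row per vocabulary entry. The sketch below assumes a toy whitespace tokenizer and a six-word vocabulary (both hypothetical; real models use subword tokenizers), and uses a randomly initialized table in place of trained weights. The scaling by sqrt(d_model) follows the original paper, which multiplies the embedding weights by that factor.

```python
import numpy as np

# Toy vocabulary (hypothetical; real models use subword vocabularies).
vocab = {"<unk>": 0, "the": 1, "cat": 2, "sat": 3, "on": 4, "mat": 5}
d_model = 8

rng = np.random.default_rng(42)
# One row per token id; random here, learned during training in a real model.
embedding_table = rng.normal(scale=0.02, size=(len(vocab), d_model))

def tokenize(text):
    # Map each lowercase word to its id, falling back to <unk> for unknown words.
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]

def embed(token_ids):
    # An embedding lookup is plain row indexing into the table; the original
    # paper additionally scales the result by sqrt(d_model).
    return embedding_table[token_ids] * np.sqrt(d_model)

ids = tokenize("The cat sat on the mat")
vectors = embed(ids)
print(ids)            # [1, 2, 3, 4, 1, 5]
print(vectors.shape)  # (6, 8)
```

Both occurrences of "the" receive identical vectors: the embedding alone says nothing about where a token appears in the sequence.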

2. Positional Encoding:

Unlike RNNs, which read tokens in order, Transformers have no built-in sense of token order: self-attention on its own treats the input as an unordered set. Positional encoding solves this by adding position-specific information to each token embedding. This helps the model distinguish between different positions in the sequence, preserving the context and…
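The original paper uses fixed sinusoidal encodings, PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)), while many later models learn their positional embeddings instead. Below is a minimal NumPy sketch of the sinusoidal variant; the function name is hypothetical, d_model is assumed to be even, and the all-ones "embeddings" are a placeholder used only to show that identical tokens become distinguishable once positions are added.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len, d_model):
    """Fixed sinusoidal encodings in the style of Vaswani et al. (2017).

    PE[pos, 2i]   = sin(pos / 10000**(2i / d_model))
    PE[pos, 2i+1] = cos(pos / 10000**(2i / d_model))
    Assumes d_model is even.
    """
    positions = np.arange(seq_len)[:, np.newaxis]                # (seq_len, 1)
    even_dims = np.arange(0, d_model, 2)[np.newaxis, :]          # (1, d_model/2), values 2i
    angles = positions / np.power(10000.0, even_dims / d_model)  # (seq_len, d_model/2)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)  # even dimensions use sine
    pe[:, 1::2] = np.cos(angles)  # odd dimensions use cosine
    return pe

seq_len, d_model = 6, 8
# Placeholder: the same embedding vector at every position.
token_embeddings = np.ones((seq_len, d_model))
x = token_embeddings + sinusoidal_positional_encoding(seq_len, d_model)
# After adding the encodings, identical tokens at different positions differ.
print(np.allclose(x[0], x[4]))  # False
```

Because each dimension pair oscillates at a different frequency, every position gets a distinct pattern, and the encoding can be computed for any sequence length without extra parameters.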